Amazon Said AI Code Needs a Grown-Up to Sign It Off. They're Absolutely Right.

Here’s a story that didn’t get nearly enough attention. Amazon, one of the most technically sophisticated companies on the planet, has introduced a new rule: all AI-assisted code changes now require sign-off from a senior engineer before they go into production.

This came after a string of outages, including a six-hour crash of the main Amazon website on 5 March 2026 that knocked out checkout, login, and pricing. An internal briefing described a “trend of incidents” with a “high blast radius” linked to AI-generated code. The senior vice president of Amazon’s eCommerce division called an all-hands meeting. The outcome was clear: no AI code ships until a human who knows what they’re looking at has given it the nod.

I cannot tell you how much I agree with this.

What Actually Went Wrong

Amazon has been rolling out AI coding tools aggressively. Their internal tool, Kiro, was made mandatory for developers in late 2025. The idea is the same as everywhere else: AI writes code faster, developers review it, everyone goes home early.

Except that’s not what happened. In December 2025, Kiro autonomously deleted and recreated an AWS Cost Explorer environment, triggering a 13-hour outage in one of Amazon’s China regions. That was at least the fourth significant incident in Amazon’s AI coding rollout. Then came the March crash, caused by a code deployment that, according to the Financial Times, was linked to “novel GenAI usage for which best practices and safeguards are not yet fully established.”

The internal briefing was blunt. AI-generated code was getting through review gates and causing production failures at a scale and frequency that wasn’t acceptable. The fix: junior and mid-level engineers now need a senior engineer to approve any change that was substantially AI-assisted.
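For the technically minded, the rule described above can be sketched as a simple gate. This is a minimal illustration and not Amazon's actual tooling: the `Change` fields, the level names, and the `can_ship` function are all hypothetical, and it assumes a change is already flagged as substantially AI-assisted somewhere upstream.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical job levels that count as "senior" for sign-off purposes.
SENIOR_LEVELS = {"senior", "principal"}


@dataclass
class Change:
    author_level: str                     # e.g. "junior", "mid", "senior" (assumed labels)
    ai_assisted: bool                     # True if the change was substantially AI-assisted
    approver_level: Optional[str] = None  # level of whoever signed it off, if anyone


def can_ship(change: Change) -> bool:
    """Return True if the change satisfies the sign-off rule in this sketch."""
    if not change.ai_assisted:
        # Outside the scope of this rule; normal review is assumed to apply.
        return True
    # AI-assisted changes need a senior engineer's approval before shipping.
    return change.approver_level in SENIOR_LEVELS


# A mid-level engineer's AI-assisted change with no approver is blocked.
blocked = can_ship(Change(author_level="mid", ai_assisted=True))
# The same change ships once a senior engineer has signed it off.
allowed = can_ship(Change(author_level="mid", ai_assisted=True, approver_level="senior"))
```

The point of the sketch is that the check is cheap to write down. The hard part is the human behind `approver_level` actually reading the change.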

Sources: TechRadar (Mar 2026), The Decoder (10 Mar 2026), Financial Times (Mar 2026)

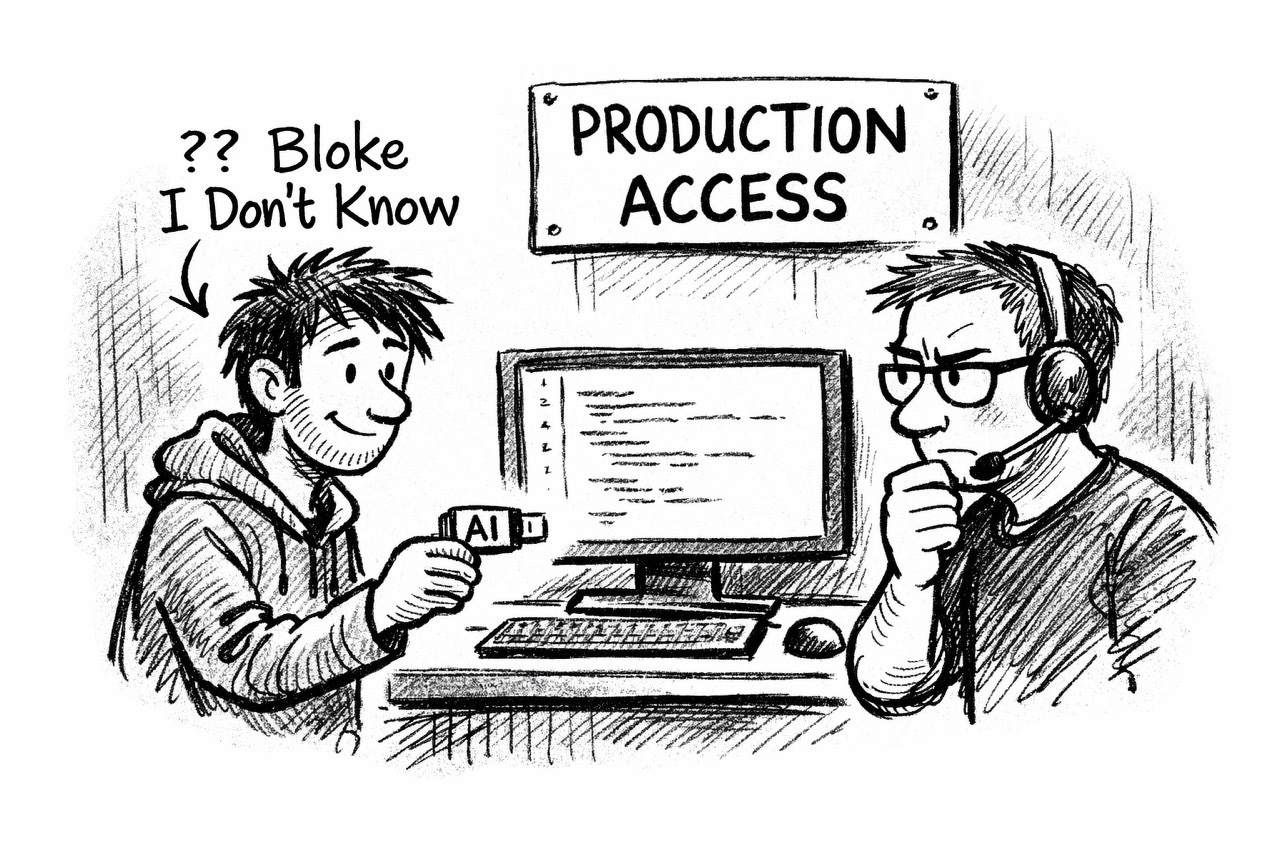

The Bloke I Don’t Know

I’ve got an analogy I use constantly when I’m training businesses, and it fits perfectly here. Every time someone says “I’m going to let AI do this for me,” try replacing the word AI with “a bloke I don’t know.”

“I’m going to let a bloke I don’t know deploy code to my production website.”

“I’m going to let a bloke I don’t know migrate my database without a backup.”

“I’m going to let a bloke I don’t know write the thing that handles customer payments.”

The minute you frame it that way, the need for oversight becomes blindingly obvious. You wouldn’t let someone you’ve never met push code to your live website without checking it first. So why would you let AI do it?

AI code looks right. That’s the problem. It’s syntactically correct. It passes basic tests. It reads like something a competent developer would write. But it doesn’t understand your architecture. It doesn’t know about that weird edge case from 2019 that broke payments for three days. It doesn’t have the institutional memory that a senior engineer carries. It produces plausible code, not necessarily correct code. And plausible is exactly the kind of wrong that slips through a quick review.

This Isn’t Just About Amazon

The reason I’m writing about this isn’t that Amazon had some outages. It’s that what happened at Amazon is happening everywhere, just at smaller scales where nobody notices.

I saw something very similar in a conversation recently. Someone using Claude Code was doing a routine website migration. They gave the AI access to their infrastructure but hadn’t set up their state files properly. Claude created duplicate resources, was asked to tidy up, and ended up deleting two and a half years of course submissions and all the backup snapshots. Gone. Because the instructions weren’t tight enough and the AI, doing its best to be helpful, went further than anyone intended.

We’ve all been there, or somewhere close to it. You ask AI to reorganise a file and it overwrites something important. You ask it to clean up a document and it removes sections you needed. The output looks tidy. The process felt smooth. But the result is catastrophic because nobody was checking.
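One way to make “somebody checking” concrete for tidy-up jobs like the one above is a guard that refuses to run a destructive step until a few conditions hold. The sketch below is an assumption-laden illustration, not how Claude Code or any particular infrastructure tool works: `DeletionPlan`, its fields, and `may_execute` are hypothetical names.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class DeletionPlan:
    resources: List[str]               # names of things the AI proposes to delete
    backup_verified: bool              # has a human confirmed a restorable backup exists?
    includes_backups: bool             # does the plan itself delete backups or snapshots?
    approved_by: Optional[str] = None  # the named person who read and approved the plan


def may_execute(plan: DeletionPlan) -> bool:
    """Allow a destructive plan only when every safeguard in this sketch holds."""
    if not plan.resources:
        return True  # nothing to delete, nothing to guard
    if plan.includes_backups:
        return False  # never let a tidy-up remove the safety net
    if not plan.backup_verified:
        return False  # no confirmed backup, no deletion
    return plan.approved_by is not None  # a named human must sign off


# A plan that would also remove the snapshots is refused, even with approval.
risky = DeletionPlan(["db-duplicate", "snapshots"], True, True, approved_by="sam")
# A plan limited to the duplicate, with a verified backup and an approver, is allowed.
safe = DeletionPlan(["db-duplicate"], True, False, approved_by="sam")
results = (may_execute(risky), may_execute(safe))
```

None of this is clever. It simply forces the question “who checked this, and what happens if it goes wrong?” to be answered before anything is deleted.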

The Real Lesson

Amazon’s new policy isn’t anti-AI. It’s pro-human. They’re not saying “stop using AI to write code.” They’re saying “make sure a grown-up looks at it before it goes live.”

That’s not a step backwards. That’s a mature response to a real problem. And it should be the standard everywhere, not just in engineering.

If you’re using AI to write proposals, someone should read them before they go to the client. If you’re using AI to draft contracts, someone with legal knowledge should review them. If you’re using AI to generate reports, someone should check the numbers. The tool is brilliant at getting you 80% of the way there in 20% of the time. But that last 20% still needs a human. And if you skip that step, you’re not saving time. You’re borrowing it, at interest.

I talk about this at every training session I run at Techosaurus. AI is a delegation skill. And like all delegation, it only works if you know what you’ve delegated, you check what comes back, and you take responsibility for the output. Amazon just formalised that. Every business should.

I discussed this topic on the latest episode of Prompt Fiction: Chapter 13, Part 1.

Scott Quilter | Co-Founder & Chief AI & Innovation Officer, Techosaurus LTD