The Goldfish Effect: Why AI's Biggest Risk Isn't Job Losses, It's Burnout

Everyone’s talking about AI replacing jobs. Almost nobody is talking about what it’s doing to the people who use it well.

I’ve been training people to use AI for over two years now. I’ve delivered thousands of hours of sessions across businesses of all sizes. And the thing I didn’t see coming, the one I should have spotted earlier, is this: the people who are best at using AI are the ones most at risk of burning out because of it.

Not because AI is broken. Because it works too well.

The Goldfish Effect

I was having a conversation this week with Chris Manley from Traction Consulting, and he nailed it with an analogy that I haven’t been able to shake. Give a goldfish a bigger tank, and it grows to fill it. Give a hard worker an AI toolkit, and their workload expands to match.

That’s exactly what’s happening. The promise of AI was that it would give people time back. That you’d do the same work in half the hours and spend the rest of the afternoon doing whatever you wanted. And for some people, maybe that’s true. But for the high-performers, the grafters, the people who’ve always run at a hundred miles an hour? They didn’t take the time back. They filled it with more work. Then they found a third thing to add on top of that.

Chris drew on a conversation with Amy at Empower Ag, who works with farmers and agricultural businesses. She’s seeing the same pattern in a completely different sector. When farmers gain efficiency through technology, they don’t take the spare time. They make the wheel go faster. They fill the gap with more graft. It’s not a productivity strategy. It’s a personality type. And it’s the same personality type that thrives in entrepreneurship, consulting, and leadership.

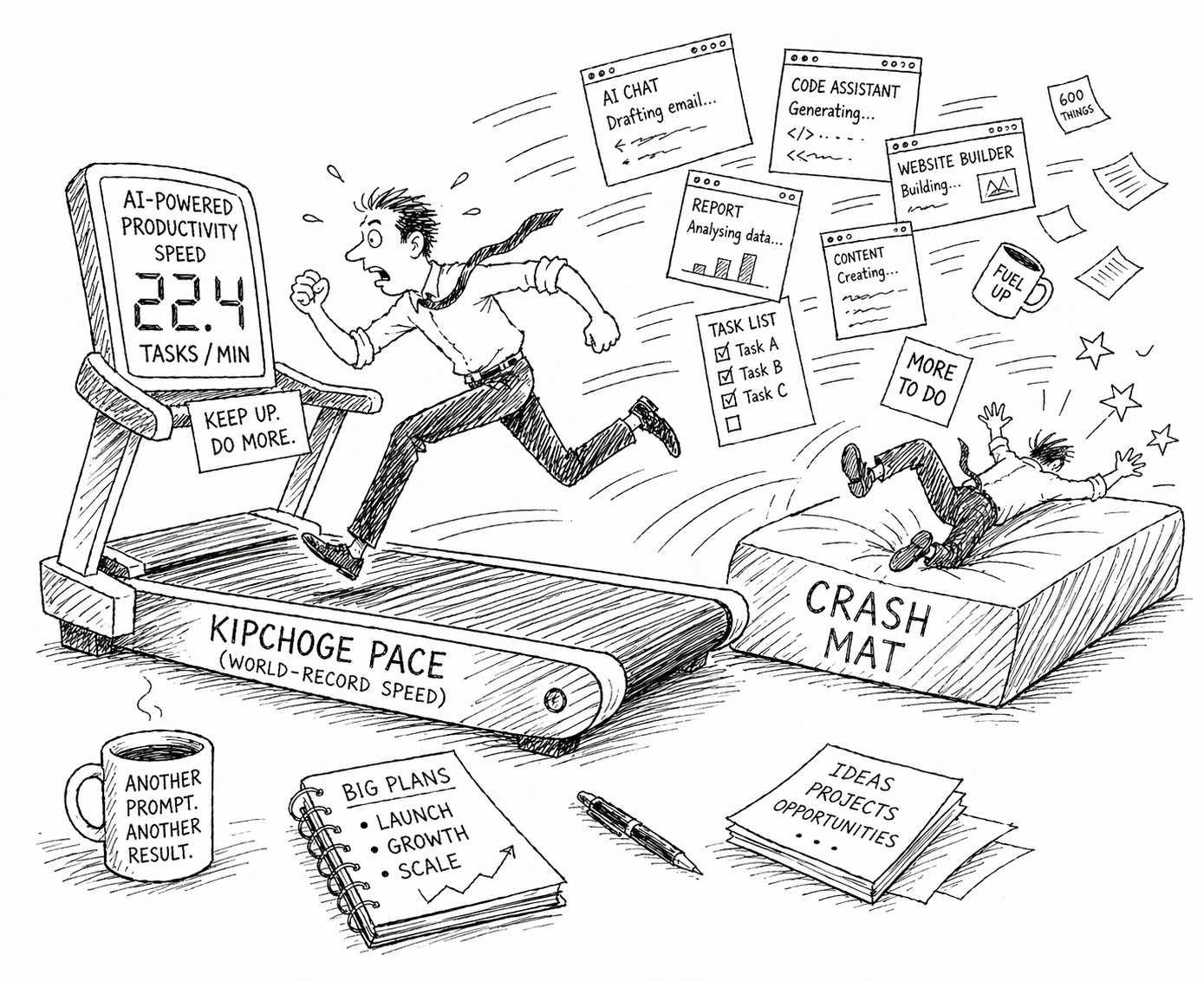

The Kipchoge Treadmill

Chris also gave me the image that I think captures this better than anything else I’ve heard. At some marathons, they set up a treadmill running at Eliud Kipchoge’s world-record pace and invite members of the public to try it. People jump on, full of confidence. Within seconds, they’re flying off the back and landing on a crash mat.

That’s what AI-enabled productivity looks like for a lot of people right now. The pace feels sustainable in the moment. You’re orchestrating three different AI sessions, drafting an email here, building a website there, coding something else over there. You’re producing at a rate that would have been unthinkable two years ago. And then one evening you stop, walk bleary-eyed into the bedroom, and realise your brain is racing so fast you can’t sleep.

I know this because I’ve lived it. I’m not going to pretend otherwise. There have been evenings where I’ve gone straight from a multi-threaded AI session to bed and just lain there, wired, unable to switch off. Not because the work was stressful. Because the pace was exhilarating, and my brain hadn’t got the memo that we’d stopped.

This Isn’t Traditional Burnout

What sets this apart from the burnout we’ve always talked about is its structure. It’s not caused by bad management or unreasonable deadlines. It’s self-imposed, and it’s driven by three things that AI specifically amplifies.

First, AI removes the friction that used to create natural breaks. There’s no waiting for a colleague to review something. No lag while a report generates. No bottleneck where you’d normally grab a coffee and let your brain decompress. The breathing space has been engineered out, and most people haven’t noticed it’s gone.

Second, the reward loop is immediate. Every completed task delivers a micro-hit of achievement. AI compresses the time between starting and finishing something, which means the dopamine cycle accelerates. You do more, faster, and the feeling of accomplishment compounds until you crash.

Third, and this is the one that gets me, the off switch disappears. When you know your AI toolkit is sitting there ready to go, rest starts to feel like waste. You could be producing. You could be prompting. Three agents are sitting idle and you feel guilty about it. The tool creates its own sense of urgency, even when there’s no deadline anywhere near you.

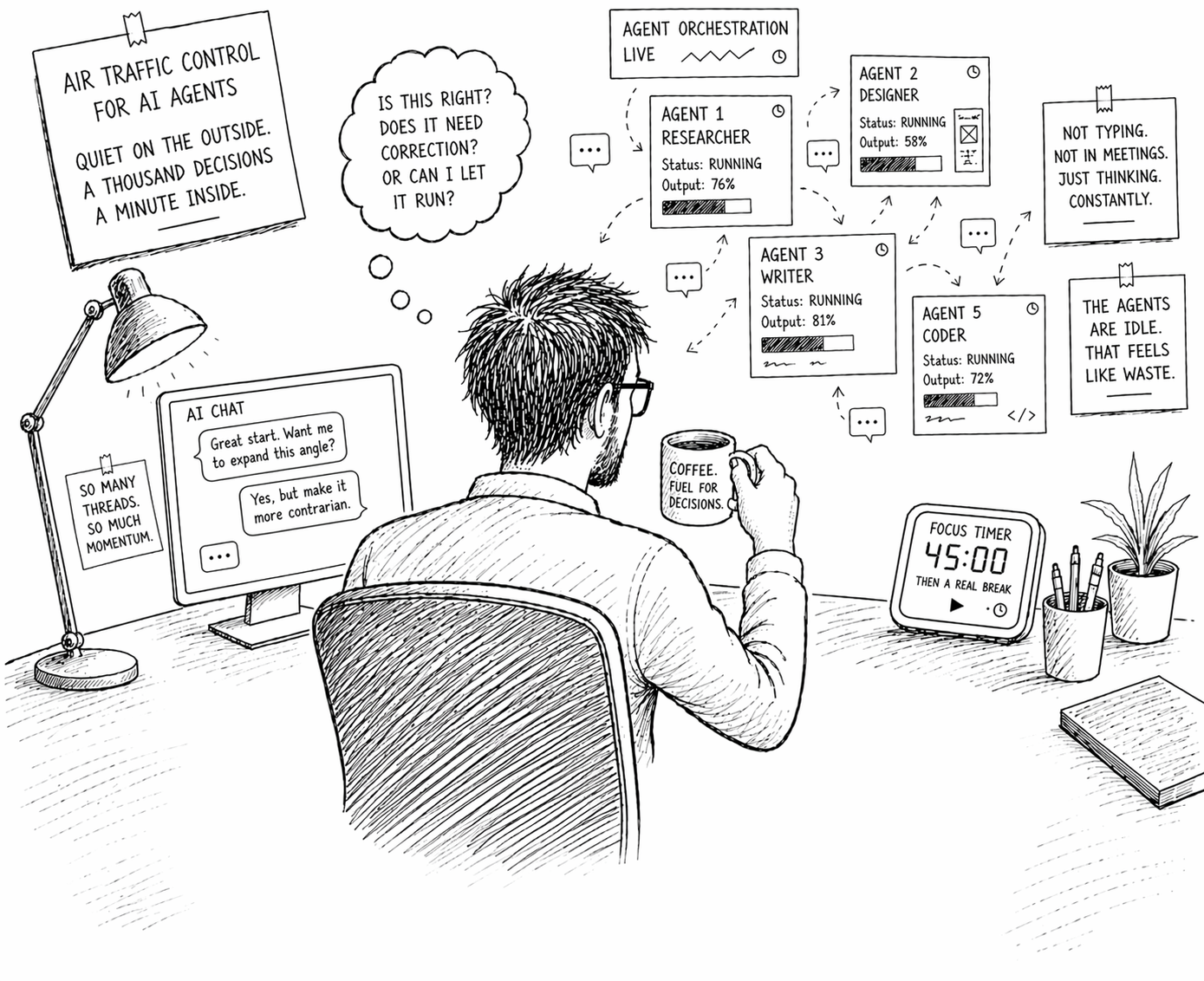

An Old Colleague Put It Better Than I Could

A former colleague of mine, Steve Clements, published a piece the other week called “The New Rhythm” that hit me like a freight train. He described the experience of orchestrating multiple AI agents as something that doesn’t feel like coding and doesn’t feel like managing. It feels like something new that doesn’t have a name yet. He compared it to air traffic control: you’re not typing much, you’re not in meetings, you might be sitting quietly with a coffee, but your brain is making a constant stream of micro-decisions about whether each output is right, whether it needs correction, or whether you can let it run.

That resonated so deeply because it’s exactly what my working day looks like. And the bit that really landed was his observation about guilt: the strange, specific guilt of having nothing running. If the agents are idle, you feel like you should be feeding them. Steve referenced a UC Berkeley study published in the Harvard Business Review that tracked 200 employees over eight months and found that nobody was being forced to overwork. People voluntarily expanded their workload because AI made “doing more” feel possible. The burnout wasn’t imposed. It was self-inflicted.

That study should be required reading for anyone leading a team that uses AI. The researchers found three distinct patterns: people taking on work that previously belonged to someone else, work seeping into moments that used to be natural pauses, and workers keeping multiple threads alive simultaneously. Sound familiar? It should. That’s what every productive AI user I know does every single day.

Sources: Harvard Business Review (9 Feb 2026), UC Berkeley Haas Newsroom (19 Feb 2026), Cenit (25 Mar 2026)

Efficiency Is Not the Same as Effectiveness

Chris made another distinction in our conversation that I think is critical. Being highly efficient (completing a high volume of tasks in a short amount of time) is not the same thing as being highly effective. The danger of AI-augmented multi-tasking is that you can become extraordinarily efficient at doing things that shouldn’t be done at all.

The strategic question should never be “how fast can we execute?” It should be “are we executing the right plan?” And when the tool makes execution feel instant, it’s dangerously easy to skip that second question entirely.

I see this in training sessions. People get excited about the speed. They start producing at a rate they’ve never experienced before. And the first thing they want to do is produce more, not think about whether what they’re producing is the right thing. The velocity is intoxicating. The strategy gets left behind.

It Turns Up the Volume on Whatever’s Already There

I shared a draft of this piece with Shane Evans, a self-leadership coach and Techosaurus Associate I work with, and his response was honest enough to quote: “I’ve felt it myself. You get on a roll with AI, things are flying, and before you know it your head’s still going when your body’s done. It doesn’t feel like stress. It feels like momentum. But it catches up with you.”

Shane’s observation, and it’s one I think is really important, is that AI doesn’t create new behaviours. It amplifies the ones that are already there. If you’ve got no boundaries, AI will expose that fast. If you’ve got a bit of self-awareness and control, it becomes a brilliant tool rather than something that runs you. The technology isn’t the problem. What you bring to it is what determines whether it helps you or hurts you.

He mapped this against his self-leadership model, and it clicked into place in a way that I think makes the whole thing more actionable.

It starts with self-awareness. Noticing when you’ve tipped from productive into compulsive. Catching those moments where you’re opening another tab or spinning up another task without even thinking about why. It also means knowing your strengths, understanding how you naturally work, and recognising how AI amplifies that. Because it will amplify whatever you give it, the good habits and the bad ones.

Then there’s mindset. This is about challenging the assumption that more equals better. Just because you can do more doesn’t mean you should. It means letting go of the guilt around stopping, and genuinely believing that rest is part of doing good work, not time wasted. That sounds obvious written down. In practice, when three AI sessions are sitting idle and you know you could be feeding them, it’s one of the hardest things to actually do.

After that comes action. Putting some structure around how you use the tools. Deciding what matters before you open AI, not after. Limiting how many things you run at once. Building in proper stops. Creating space to think rather than constantly produce. The Berkeley researchers called this an “AI practice,” and the principle is the same: you need intentional boundaries because the tool won’t create them for you.

And finally, impact. Better decisions. Better energy. Better conversations. Better work. And you don’t run yourself into the ground trying to keep up with a pace that never ends. The irony is that the people who build in the stops often end up producing better output than the ones who don’t, because their thinking is clearer, their judgement is sharper, and they’re actually choosing what to work on rather than just working on everything.

If You Teach AI, You Have to Teach This Too

This is where I think the responsibility sits, and it sits with people like me.

If you teach a room full of high-performers how to use AI without discussing the burnout risk, you’re handing them a tool that will accelerate them towards a wall. That’s not responsible training. That’s lighting the fuse and leaving the room.

At Techosaurus, I’ve started building this into how I deliver AI sessions. Not as an afterthought or a footnote, but as a genuine part of the conversation. AI is both a blessing and a curse, and if we’re honest about that duality, people can make informed choices about how they use it. If we pretend it’s all upside, we’re setting people up to fail.

The Berkeley researchers suggested that the new AI-enabled workday might need to be structured around three to four hours of high-intensity orchestration work, with the rest dedicated to conversations, thinking, planning, and the human work that doesn’t happen inside a chat window. Steve made a similar point in his piece. I think they’re both right.

The people who will thrive with AI aren’t the ones who work the longest hours with it. They’re the ones who design their workflow deliberately, who build their own guardrails, and who know when the most productive thing they can do is close the laptop and go for a walk.

The Tool Will Never Tell You to Stop

That’s the line I keep coming back to. AI will never tell you to stop. There is always another prompt, another task you could run in parallel, another agent you could spin up. The pace is infinite if you let it be.

So you have to build the stopping points yourself. For me, that means structured decompression time between working and sleeping. It means recognising when I’ve crossed from productive into compulsive. It means being honest with myself about the fact that just because I can do something doesn’t mean I should.

It’s a conversation I’ll be having a lot more of. With the businesses I train, with the people I work alongside, and if I’m being truthful, with myself.

Because the goldfish will always grow to fill the tank. The only question is whether you’re the one choosing the size of the tank, or whether you’ve let the tool choose it for you.

Scott Quilter, FBCS | Co-Founder & Chief AI & Innovation Officer, Techosaurus LTD