The Mel Robbins Copilot post got it wrong on three counts

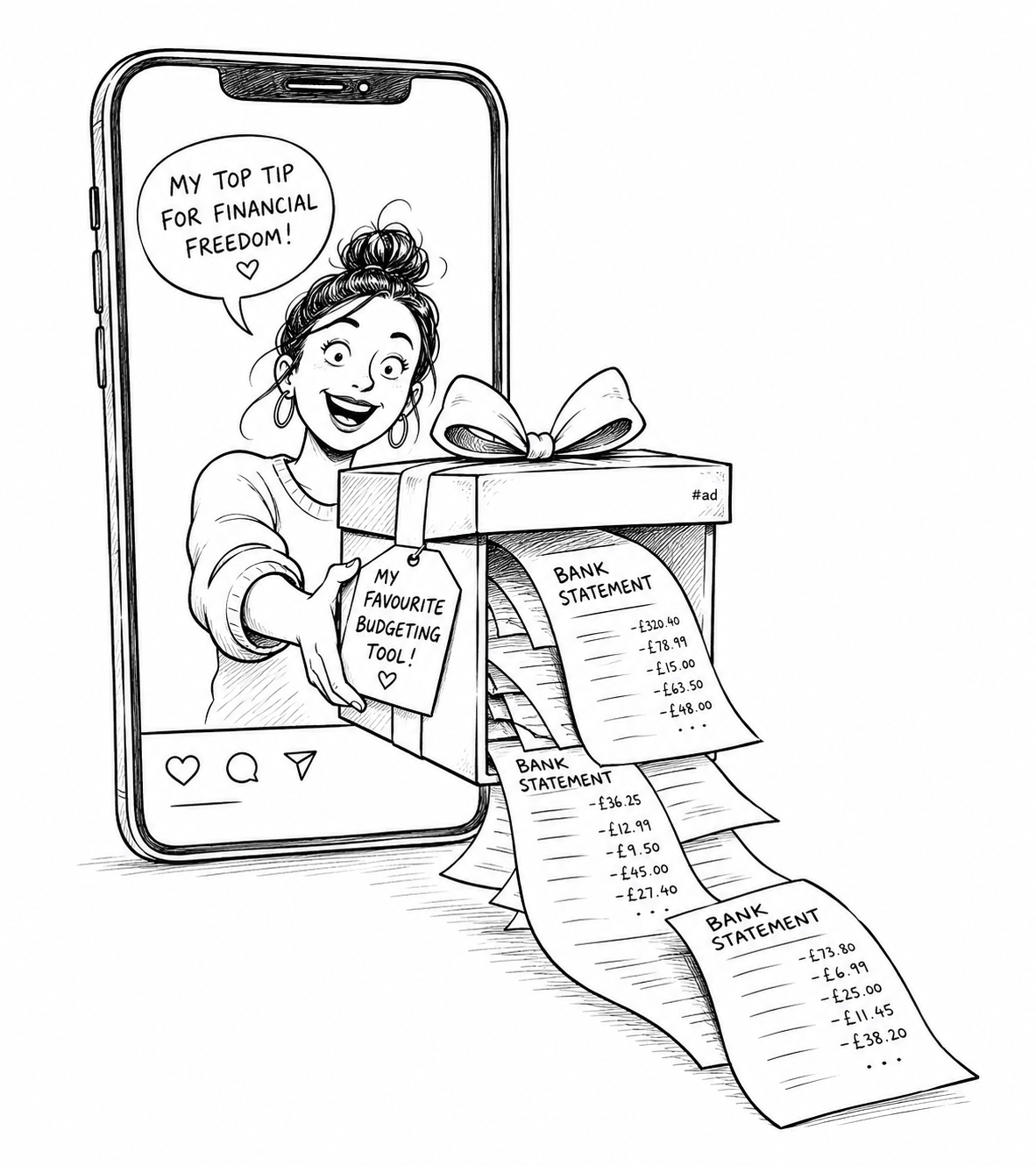

Last week, a self-help podcaster with 12 million Instagram followers told women to upload their bank statements, debt statements, bills and income to Microsoft Copilot. The disclosure that this was a paid promotion sat in a hashtag at the bottom of the caption. The internet noticed.

Mel Robbins' post on 2 May was framed as practical money advice for women who, in her words, are at risk of falling behind on AI. By the time the criticism settled, three things were clear. There was a paid sponsorship most casual viewers wouldn’t register. There was a privacy briefing missing from the script. And there was a tired old narrative about women and technology being trotted out as if no one had bothered to check the data lately.

Each of those is worth a moment.

Look at who’s paying before you take the advice

When something this confident lands on your feed, the first question to ask is who’s paying for it. Robbins' post carried the #copilotpartner tag and made clear it was brought to you by Microsoft Copilot, the same product she was urging her followers to fill with their financial paperwork.

That doesn’t make her wrong by default. But it should change how you read what she said. This is advertising in a wellness wrapper. The word for that stays the same whether it arrives in a TV ad break or as a friendly Instagram caption from someone you’ve come to trust.

The thing that gets me is how often the disclosure becomes the smallest thing on the page. A hashtag tucked into a caption isn’t a meaningful warning. Most people scroll past it. They hear “use this to feel better about your money” and that’s the message that lands.

This is why the line between content and commerce matters. Once you blur it, every piece of advice becomes negotiable.

A little knowledge can do a lot of damage

The more practical problem is what was actually in the prompt. Followers were told to share documents like bank statements, debt statements, bills and income information to help the assistant build a picture of their money. That’s the entire shape of someone’s financial life, packaged neatly and posted to a consumer chat tool.

This is where it gets nuanced, and the nuance is missing from a lot of the panic. Microsoft says files uploaded to Copilot are stored for up to 18 months and then deleted. The company also says that prompts inside its enterprise Microsoft 365 Copilot product aren’t used to train its foundation models. That’s the safer end of the spectrum.

The consumer side is murkier. Microsoft can train its models on conversation activity by default, with users left to opt out. Most users won’t know that toggle exists and won’t go looking for it. So the sweeping claim that “everything you upload becomes training data” overstates the case, and the reassurance that “it’s all safe” understates it. Both are wrong.

The bigger risk is exposure. Your data sits in your account. Your account is one credential away from someone else. Tools change their terms, and free or low-cost consumer products especially love to revisit those terms quietly. Once you’ve handed over a clear picture of your debts, your direct debits and your salary, you’ve created another place that picture can leak from.

There’s a useful comparison. You wouldn’t email your bank statements to a contact form on a website you’d never used. You wouldn’t print them off and leave them on a cafe table. The instinct to be careful in those situations is the same instinct people are quietly switching off when a friendly voice on Instagram says “just paste it in.”

If you want to use AI on your money, anonymise things first. Strip the account numbers, sort codes and reference numbers. Summarise your spending categories. Choose tools that are designed for confidential data, with proper enterprise controls, rather than the consumer version of a chatbot.
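To make that concrete, here is a minimal sketch in Python of what stripping identifiers and summarising by category could look like before anything goes near a chatbot. The patterns are my own assumptions about a typical UK statement line (a `12-34-56` sort code, an eight-digit account number, a `Ref:` field), and the function names `redact` and `summarise_by_category` are made up for illustration. Check the output by eye: a date written as `12-05-25` would also be caught by the sort code pattern, and your bank's layout may need different rules.

```python
import re
from collections import defaultdict

# Assumed formats for a UK-style statement; adjust to match your own bank.
REFERENCE = re.compile(r"\b(?:ref|reference)[:\s]+[A-Z0-9-]*\d[A-Z0-9-]*", re.IGNORECASE)
SORT_CODE = re.compile(r"\b\d{2}-\d{2}-\d{2}\b")
ACCOUNT_NUMBER = re.compile(r"\b\d{8}\b")


def redact(text: str) -> str:
    """Replace reference numbers, sort codes and account numbers with placeholders."""
    text = REFERENCE.sub("[REFERENCE]", text)
    text = SORT_CODE.sub("[SORT CODE]", text)
    text = ACCOUNT_NUMBER.sub("[ACCOUNT]", text)
    return text


def summarise_by_category(transactions):
    """Add up (category, amount) pairs so only the totals need to be shared."""
    totals = defaultdict(float)
    for category, amount in transactions:
        totals[category] += amount
    return {category: round(total, 2) for category, total in totals.items()}


line = "Payment from 12-34-56 12345678 Ref: AB-9981 GROCERIES 42.50"
print(redact(line))

month = [("groceries", 42.50), ("groceries", 17.25), ("transport", 30.00)]
print(summarise_by_category(month))
```

The point of the second function is that category totals are usually all the assistant needs to talk sensibly about a budget. The statement itself stays on your machine.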

What didn’t help in the original post was the absence of any of that. No data warning, no explanation of where your information lives, no distinction between a secure environment and a public one. Skipping that part is the bit that earns the criticism.

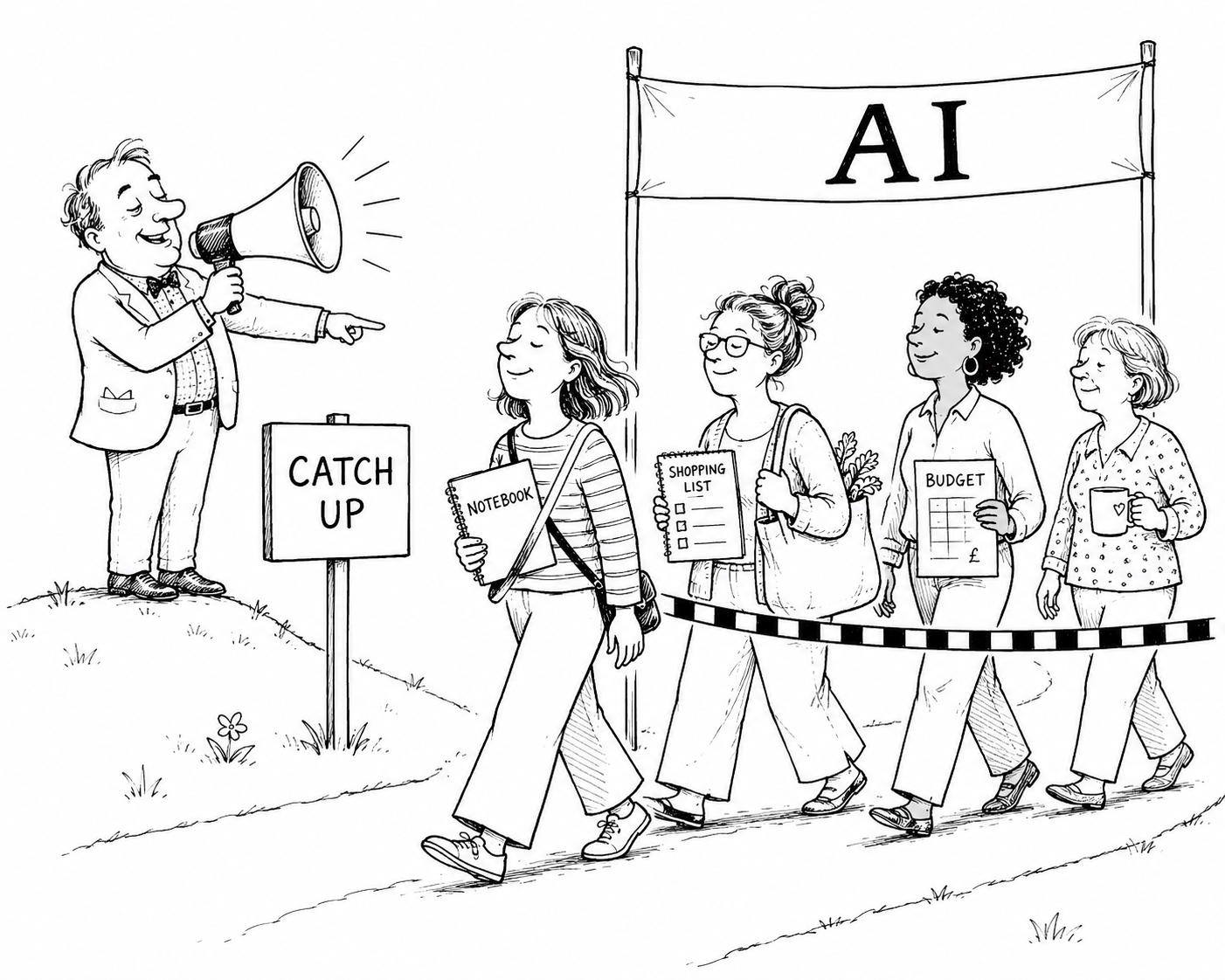

“Women are behind on AI” is doing a lot of heavy lifting

The third point is where the framing aged worst.

Robbins leaned on a Harvard Business School working paper that found women were about 20% less likely than men to use generative AI tools. That paper exists. It’s solid research. And in workplace settings, especially earlier on, that gap was visible.

But quoting that figure as if it’s the whole story paints a picture that’s already out of date.

OpenAI published its first proper consumer usage study in September 2025, drawing on more than 1.5 million messages from over 130,000 ChatGPT users. The headline finding on demographics is striking. The share of users with typically feminine names rose from 37% in January 2024 to 52% by July 2025. On the biggest consumer AI platform in the world, women are now the majority of users.

The same study is interesting on use cases. Around 70% of consumer ChatGPT use is non-work. It’s practical guidance, writing, planning, advice, ideas and research. Users with typically feminine names skew slightly more towards writing and practical guidance. Users with typically masculine names skew slightly more towards technical help and information lookup.

That’s adoption looking very normal: people reaching for what’s useful in their actual lives. They use AI for parenting, cooking, garden plans, holidays, health questions, family budgets, study and side projects, and quietly upgrade their own writing along the way.

So when an influencer post leans on a 20% workplace gap as a reason for women to catch up, the more honest version of that sentence is: millions of women are already there, and they’ve been there for a while.

There’s a sharper version of this point too, and it came from Dr Jen Gunter in her response to the original post. Her line was that women’s lower AI usage in some surveys may be a self-protection mechanism, given that the underlying systems are trained on data shaped by social inequality. Whether you fully buy that or not, the instinct is worth taking seriously. A pause before uploading the most intimate details of your finances into a black box is a reasonable instinct, often a competent one, and it shouldn’t be coded as falling behind.

Her closing line is the one I keep coming back to: “Do not confuse using a tool with using it appropriately.” That’s the whole conversation in one sentence.

What good advice on using AI with money would have sounded like

If you wanted to encourage anyone, of any gender, to use AI sensibly with their money, you’d land somewhere closer to this.

Use AI to explain your statement, translate the jargon and surface the questions you should be asking. Ask it to draft questions for your accountant or independent financial adviser before you sit down with them. Ask it to compare two pension options at a high level, or to walk you through compound interest with examples that match your situation. Get it to turn a messy month of receipts into categories you can see, while you keep the original documents on your own machine. Build a habit of a monthly money check-in and let AI help you think, rather than asking it to hold the file.

That sort of advice takes more than a hashtag. It also doesn’t sell a sponsor, which is, I suspect, why we got the version we got.

The bigger lesson

The Copilot story is really a story about who gets to set the standard for what good AI use looks like in public.

When the loudest voices are paid voices, when the privacy detail goes missing and the data on adoption is years out of date, the people getting blamed for being slow are usually the ones still paying attention. Their caution is doing the work the disclosure should have done.

If you’re going to teach people how to use AI with their money, start with where the data goes, who can read it and what to anonymise before you upload anything. Start there, and the rest of the advice becomes useful instead of risky.